How ChatGPT Works: The Model Behind The Bot

https://towardsdatascience.com/how-chatgpt-works-the-models-behind-the-bot-1ce5fca96286

Mirror, Mirror on the Wall: Working with AI

It’s important when working with the current generation of AI systems (principally Large Language Models or LLMs) to approach them with caution and circumspection. The reason for this is essentially human fallibility – on the part of both the end user and the system itself.

The computer science behind generative AI is moving so fast that innovations emerge every month. How researchers choose to use them will dictate their, and our, future.

The system I’m currently using – ChatGPT – is genuinely revolutionary in how helpful it is as a programming assistant – it has saved me hours and hours working out approaches to programming problems. The underpinning technology is, in fact, several years old, but the way it has been implemented and made available is what sets it apart (expect many more of these sorts of systems over the next few years).

But it has also hallucinated things that simply don’t exist – and presented them in a convincing matter-of-fact way:

Q: How can I import a multispectral geotiff into Unreal Engine 5 and break it into different layers for selected wavebands all correctly geolocated?

ChatGPT: To import a multispectral GeoTIFF into Unreal Engine 5 and break it into different layers for selected wavebands, you will need to use a plugin or script that is capable of handling multispectral imagery. One example is the “GeoTIFF Import” plugin, which is available on the Unreal Engine Marketplace. This plugin allows you to import a GeoTIFF file and separate it into different layers for each band.

Once you’ve imported the GeoTIFF, you can use the “Landscape” tool to create a terrain that corresponds to the image. You can then use the “Landscape Layer Blend” node in the material editor to control the blending between the different layers of the image.

To correctly geolocate the image, you will need to use the “World Aligned Blocking” feature, which allows you to align the image with the terrain using GPS coordinates. In the Unreal Engine, you can use the “World Settings” panel to set the GPS coordinates for your image.

Alternatively, you can use Python scripts that are designed to handle the import of multispectral GeoTIFFs and separate them into different layers, such as rasterio and gdal libraries. You can then use these layers as textures in the Unreal Engine to create a 3D representation of the multispectral data with accurate geolocation.

I’ve highlighted the plausible falsehoods – for instance, there is no GeoTIFF import plugin for Unreal Engine in the UE Marketplace. There are off-the-shelf solutions for this such as Landscaping, Cesium and Terraform Pro (amongst others out there on the interwebs) – and it would be great if UE could directly import GeoTIFFs via a native plugin. But there isn’t – instead ChatGPT hallucinates a solution. It sounds plausible – just like using a ‘World Aligned Blocking’ feature, or simply using the ‘World Settings’ panel to set GPS coordinates.

Essentially the system is confabulating. Large Language Models are pejoratively referred to as ‘stochastic parrots’ – they act basically as ‘token prediction’ machines, where there is no awareness of on-going context within a given text generated by the system, and certainly no concept of inference or common-sense. Long passages of text or programming are simply realisations of the likelihood of these generated token streams on a larger scale than individual words, articulated within the interpretive reception of the ‘user’ that may perceive ‘seeming’ coherence:

We say seemingly coherent because coherence is in fact in the eye of the beholder. Our human understanding of coherence derives from our ability to recognize interlocutors’ beliefs [30, 31] and intentions [23, 33] within context [32]. That is, human language use takes place between individuals who share common ground and are mutually aware of that sharing (and its extent), who have communicative intents which they use language to convey, and who model each others’ mental states as they communicate. As such, human communication relies on the interpretation of implicit meaning conveyed between individuals….

Text generated by an LM is not grounded in communicative intent, any model of the world, or any model of the reader’s state of mind. It can’t have been, because the training data never included sharing thoughts with a listener, nor does the machine have the ability to do that. This can seem counter-intuitive given the increasingly fluent qualities of automatically generated text, but we have to account for the fact that our perception of natural language text, regardless of how it was generated, is mediated by our own linguistic competence and our predisposition to interpret communicative acts as conveying coherent meaning and intent, whether or not they do [89, 140]. The problem is, if one side of the communication does not have meaning, then the comprehension of the implicit meaning is an illusion arising from our singular human understanding of language (independent of the model).

Nevertheless, even with these caveats, the system provides a valuable and useful distillation of a hugely broad-range of knowledge, and can present it to the end user in an actionable way. This has been demonstrated by my use of it in exploring approaches toward Python programming for the manipulation of GIS data. It has been a kind of dialogue – as it has provided useful suggestions, clarified the steps taken in the programming examples it has supplied, and helped me correct processes that do not work.

But it is not a dialogue with an agent – seeming more akin to a revealing mirror, or a complex echo, from which I can bounce back and forth ideas, attempting to discern a truth for my questions. This brings with it a variety of risks, depending upon the context and domain in which it is applied:

The fundamental problem is that GPT-3 learned about language from the Internet: Its massive training dataset included not just news articles, Wikipedia entries, and online books, but also every unsavory discussion on Reddit and other sites. From that morass of verbiage—both upstanding and unsavory—it drew 175 billion parameters that define its language. As Prabhu puts it: “These things it’s saying, they’re not coming out of a vacuum. It’s holding up a mirror.” Whatever GPT-3’s failings, it learned them from humans.

Academic research as well as popular media often depict both AGI and ASI as singular and monolithic AI systems, akin to super-intelligent, human individuals. However, intelligence is ubiquitous in natural systems—and generally looks very different from this. Physically complex, expressive systems, such as human beings, are uniquely capable of feats like explicit symbolic communication or mathematical reasoning. But these paradigmatic manifestations of intelligence exist along with, and emerge from, many simpler forms of intelligence found throughout the animal kingdom, as well as less overt forms of intelligence that pervade nature. (p.4)

…AGI and ASI will emerge from the interaction of intelligences networked into a hyper-spatial web or ecosystem of natural and artificial intelligence. We have proposed active inference as a technology uniquely suited to the collaborative design of an ecosystem of natural and synthetic sensemaking, in which humans are integral participants—what we call shared intelligence. The Bayesian mechanics of intelligent systems that follows from active inference led us to define intelligence operationally, as the accumulation of evidence for an agent’s generative model of their sensed world—also known as self-evidencing. (p.19)

The hottest new programming language is English

— Andrej Karpathy (@karpathy) January 24, 2023

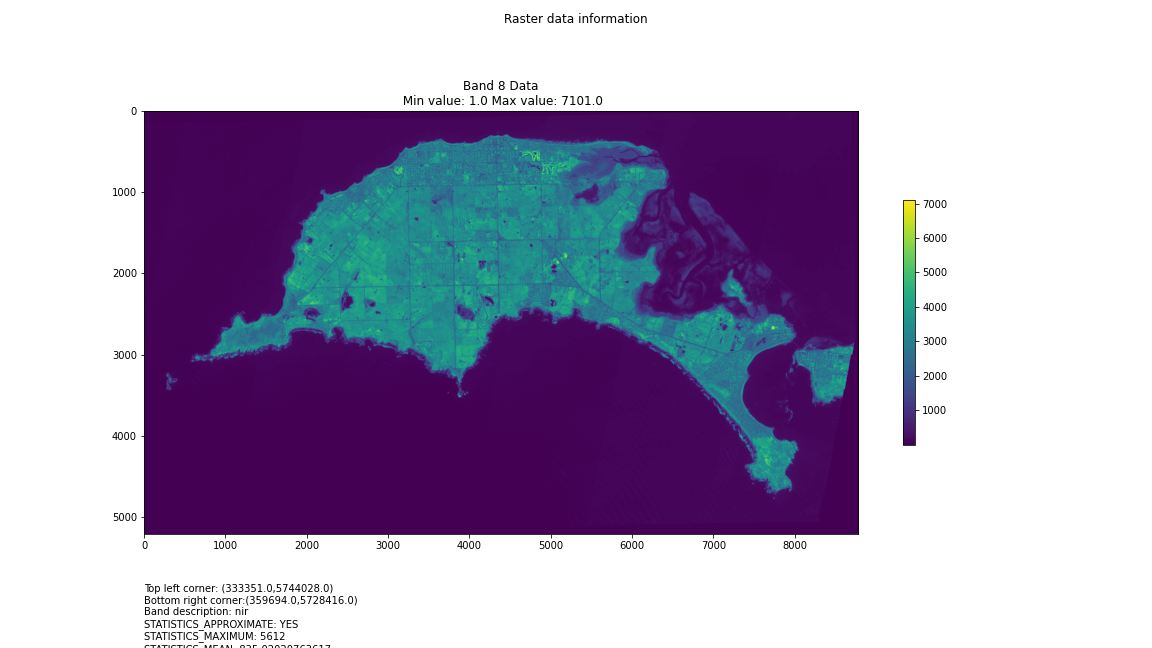

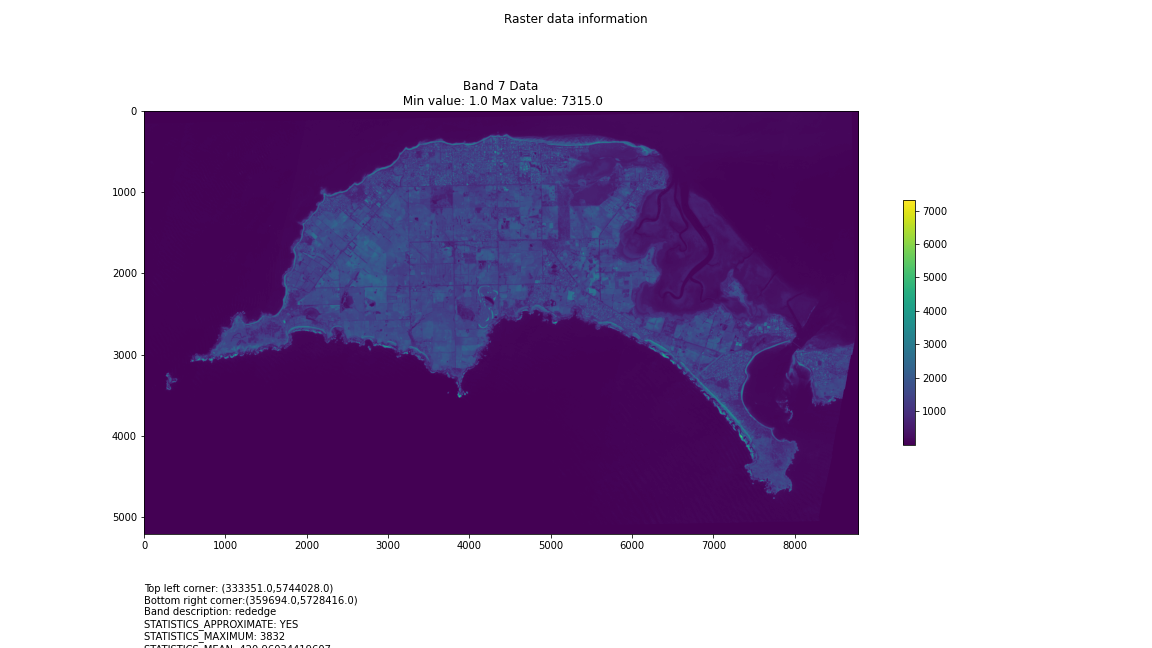

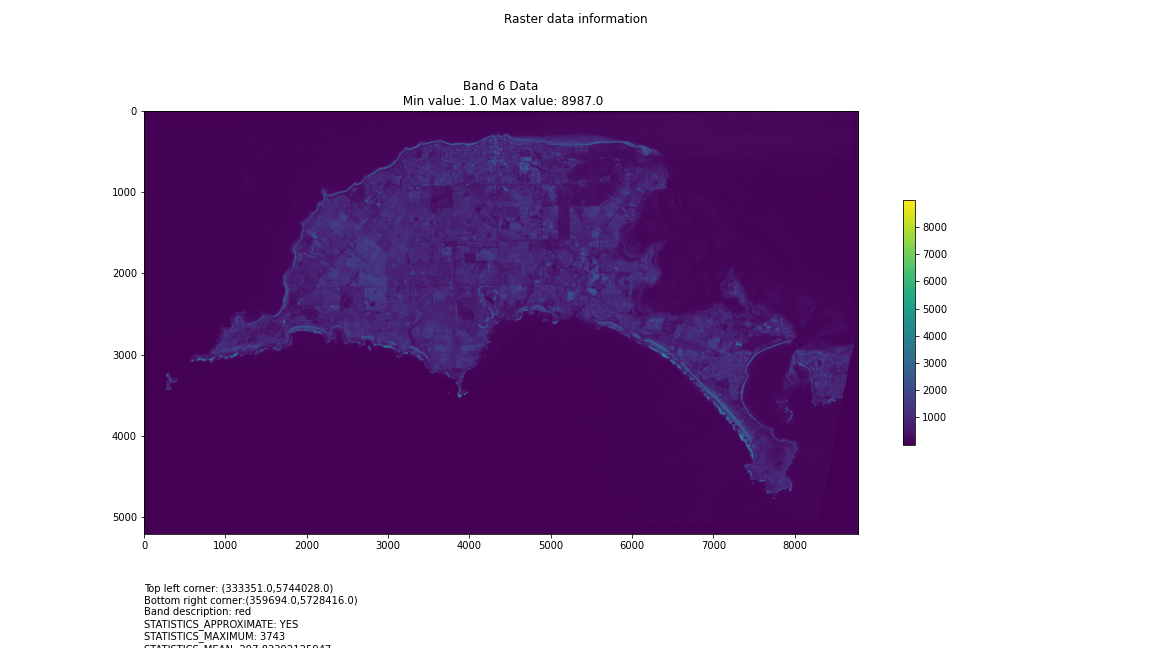

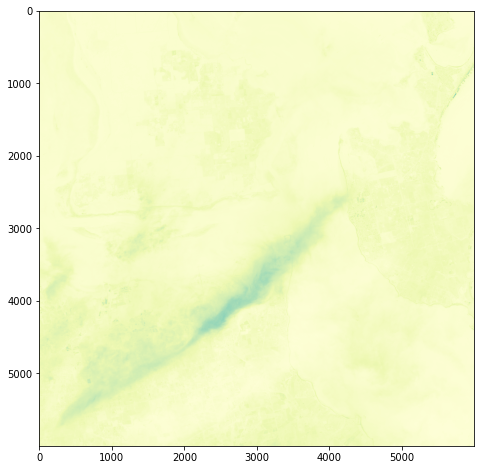

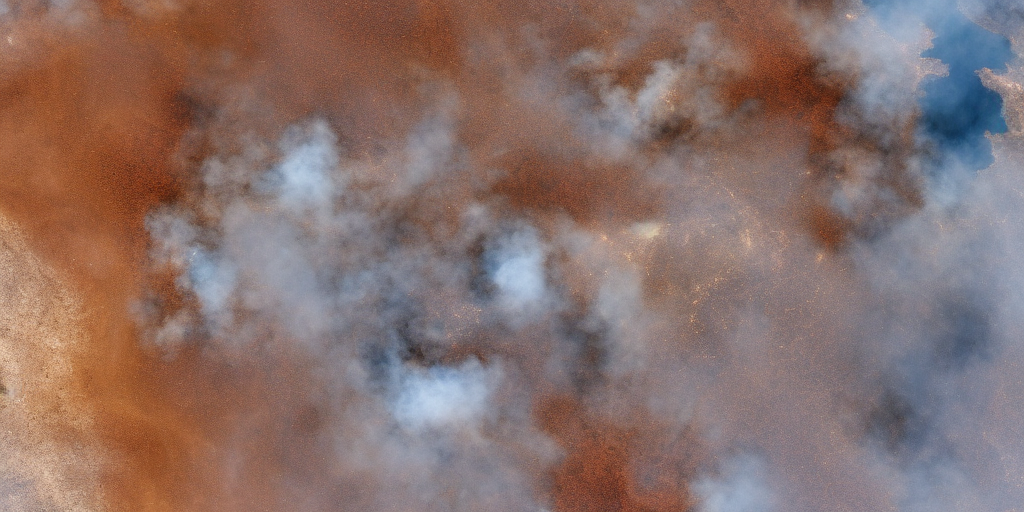

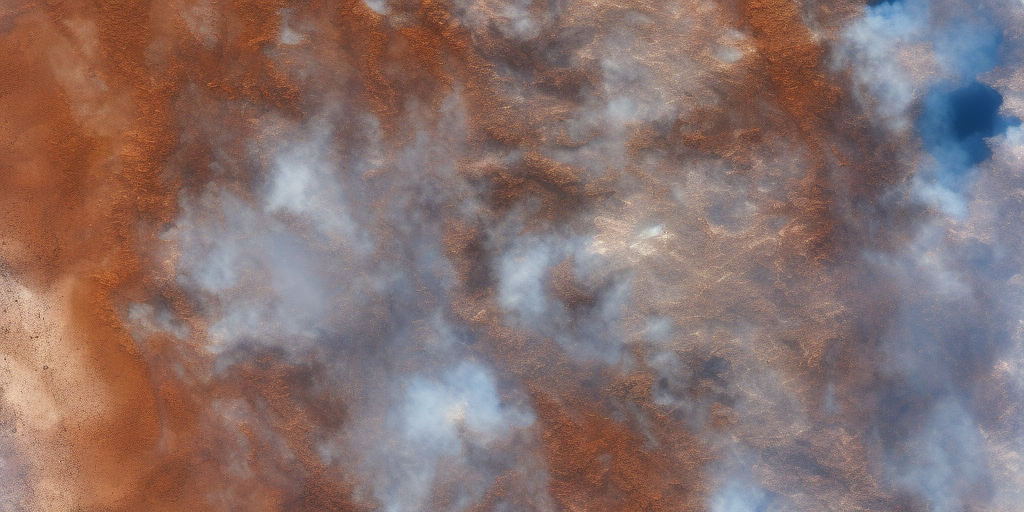

Visualising Phillip Island

One objective (amongst many) that Chris and I have identified is to start by looking at islands. The reason behind this is simple: an island presents a defined and constrained geographic area – not too big, not too small (depending on the island, of course) – and I’ve had a lot of experience working with island data on my recent Macquarie Island project with my colleague Dr Gina Moore. It provides some practical limits to the amount of data we have to work with, whilst engaging many of the technical issues one might have to address.

With this in mind, we’ve started looking at Phillip Island (near Melbourne, Victoria) and Kangaroo Island (South Australia). Both fall under a number of satellite paths, from which we can isolate multispectral and hyperspectral data. I’ll add some details about these in a later post – as there are clearly issues around access and rights that need to be absorbed, explored and understood.

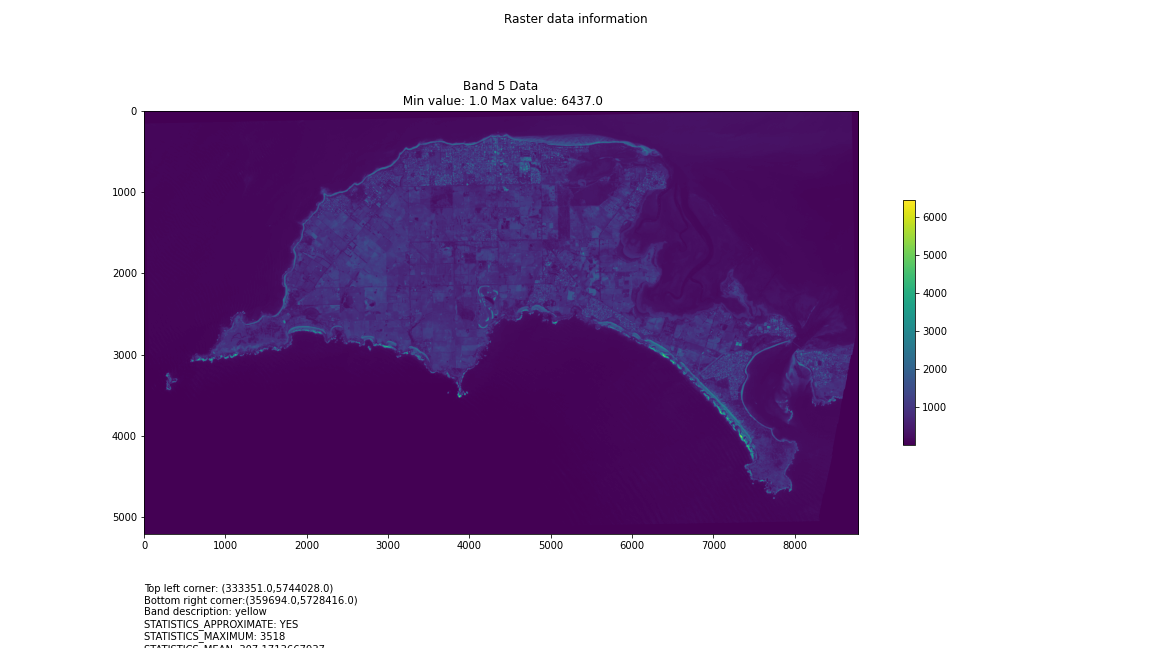

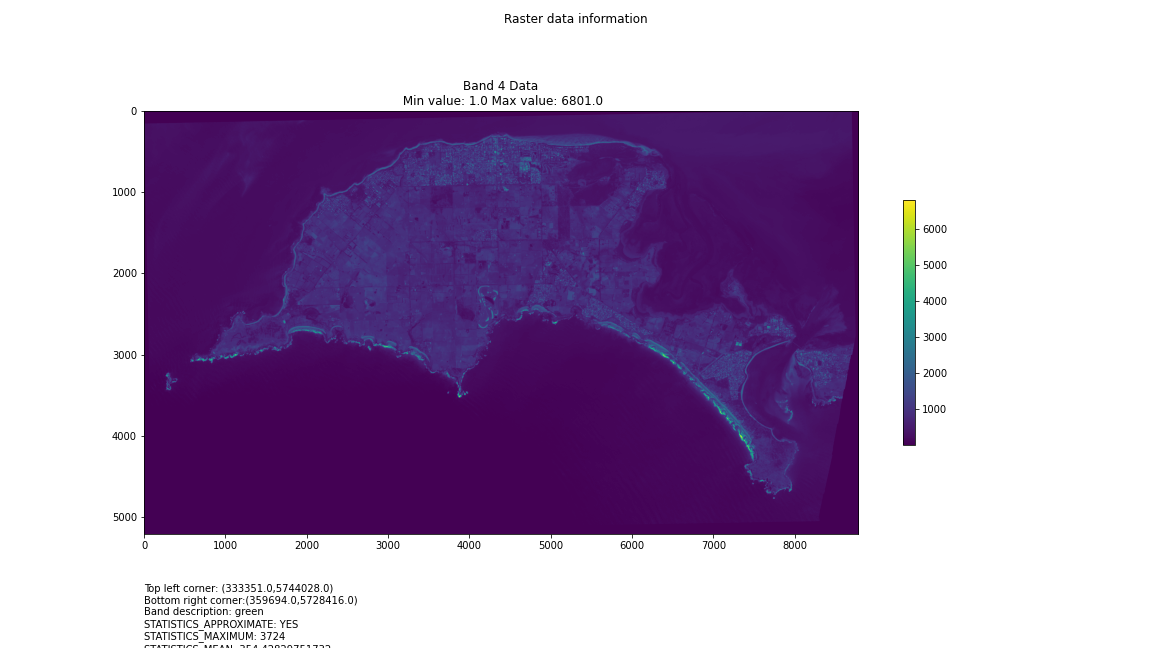

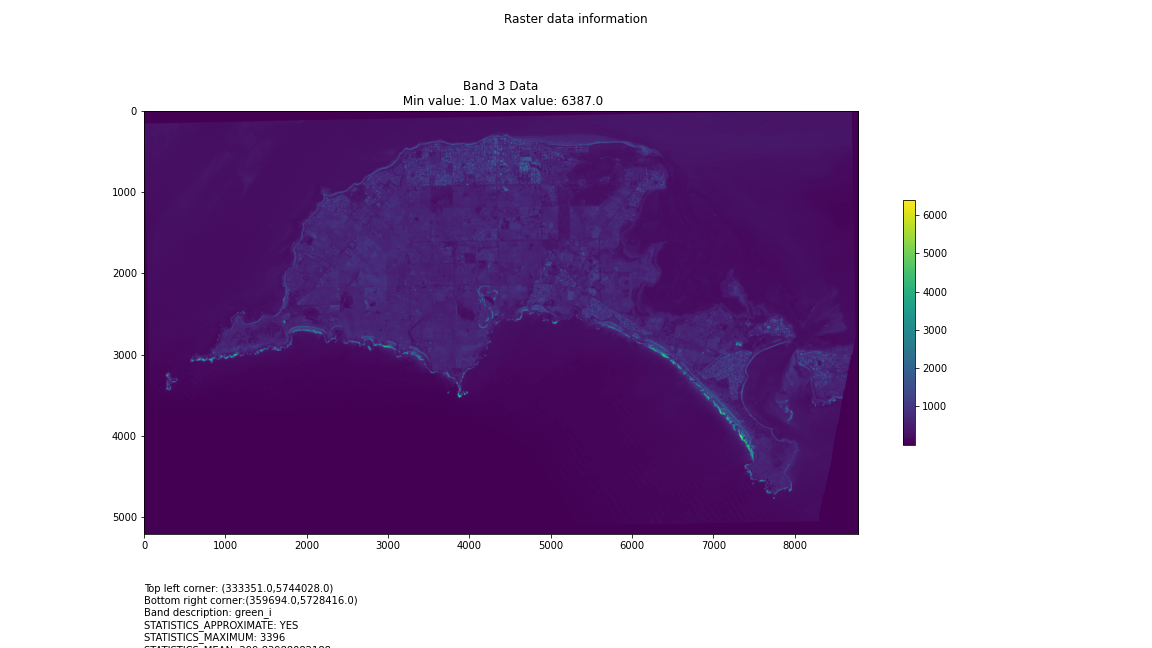

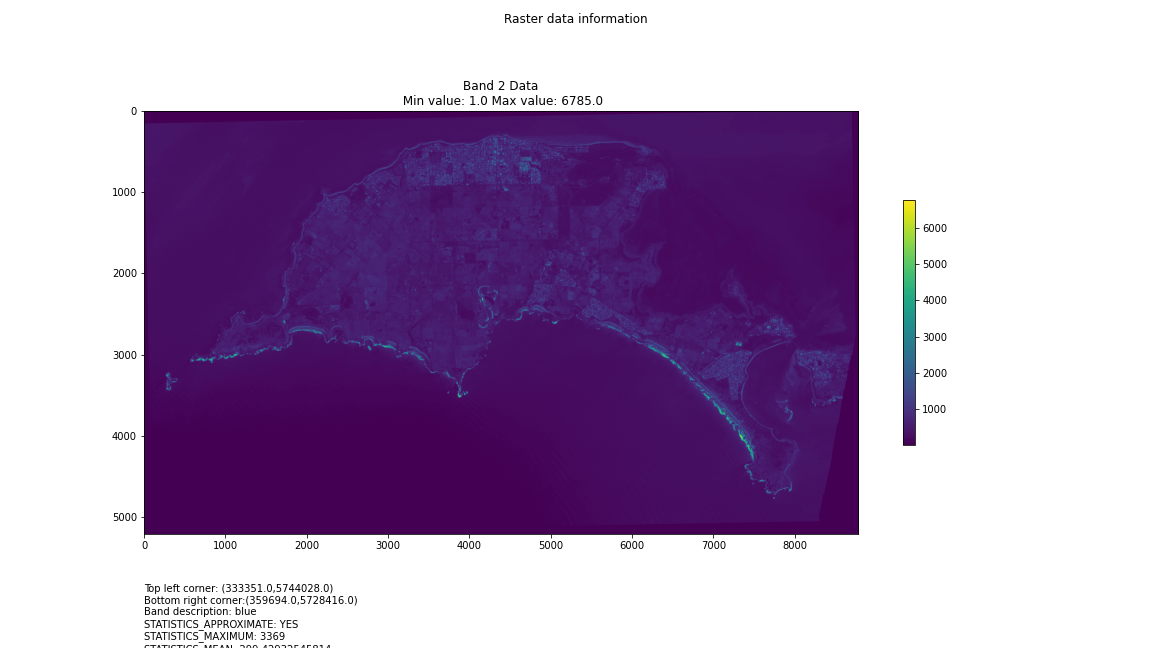

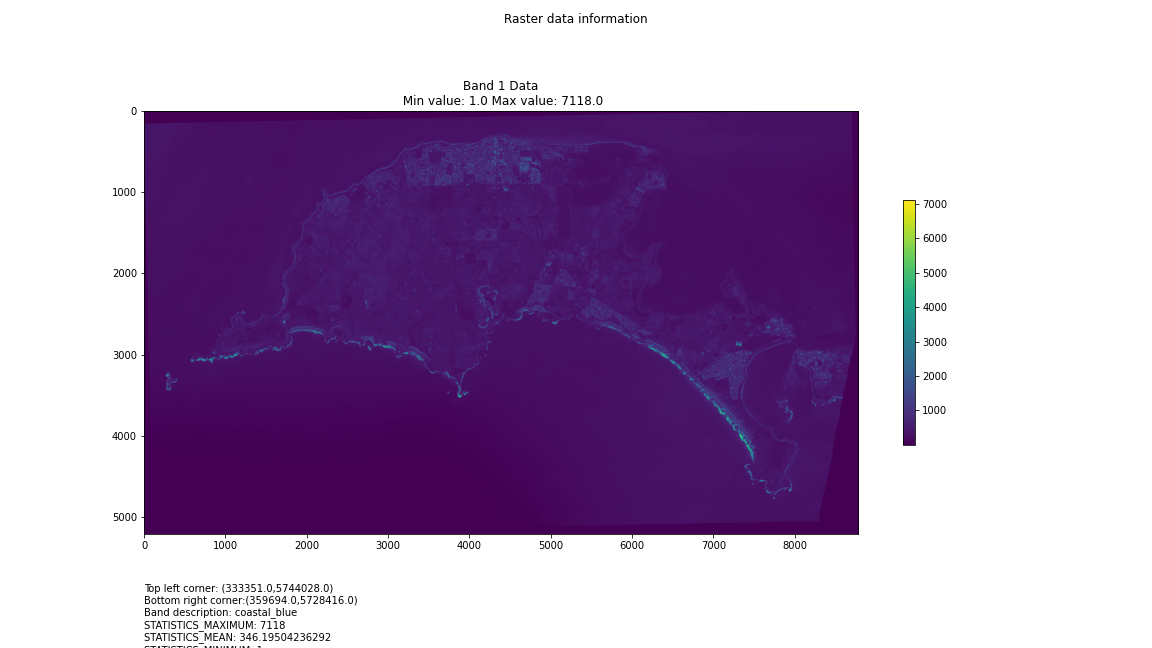

For a first attempt, here is a Google Colab script for looking at some Phillip Island data from the XXX Satellite:

# Import necessary libraries
import os
from osgeo import gdal
from google.colab import drive
import matplotlib.pyplot as plt

# Mount Google Drive
# This will ask for permissions: you will need to log in through your Google account in a pop-up window
drive.mount('/content/drive')

# Open the multispectral GeoTIFF file
# Set the file path to the folder with the relevant data in it on your Google Drive
# (once mounted, it appears as a folder called 'drive' in the panel to the left)
file_path = '/content/drive/MyDrive/DATA/XXX_multispectral.tif'
output_dir = '/content/drive/MyDrive/DATA/OUTPUT'
src_ds = gdal.Open(file_path)

# Use GDAL to get some characteristics of the data in the file
print("Projection: ", src_ds.GetProjection())  # projection
print("Columns:", src_ds.RasterXSize)  # number of columns
print("Rows:", src_ds.RasterYSize)  # number of rows
print("Band count:", src_ds.RasterCount)  # number of bands
print("GeoTransform", src_ds.GetGeoTransform())  # affine transform

# Use gdal.Info (the equivalent of the gdalinfo command) to print and save information about the raster file
# This is extracted from the GeoTIFF itself
info = gdal.Info(file_path)
print(info)
os.makedirs(output_dir, exist_ok=True)
info_file = os.path.join(output_dir, "raster_info.txt")
with open(info_file, "w") as f:
    f.write(info)

# Retrieve the band count and the corner coordinates (these are the same for every band)
band_count = src_ds.RasterCount
geotransform = src_ds.GetGeoTransform()
top_left_x = geotransform[0]
top_left_y = geotransform[3]
w_e_pixel_res = geotransform[1]
n_s_pixel_res = geotransform[5]
x_size = src_ds.RasterXSize
y_size = src_ds.RasterYSize
bottom_right_x = top_left_x + (w_e_pixel_res * x_size)
bottom_right_y = top_left_y + (n_s_pixel_res * y_size)
coordinates = ["Top left corner: ({},{})".format(top_left_x, top_left_y),
               "Bottom right corner: ({},{})".format(bottom_right_x, bottom_right_y)]

# Loop through each band and display it in a matplotlib image
for i in range(1, band_count + 1):
    band = src_ds.GetRasterBand(i)
    minval, maxval = band.ComputeRasterMinMax()
    data_array = band.ReadAsArray()
    plt.figure(figsize=(16, 9))
    plt.imshow(data_array, vmin=minval, vmax=maxval)
    plt.colorbar(anchor=(0, 0.3), shrink=0.5)
    plt.title("Band {} Data\n Min value: {} Max value: {}".format(i, minval, maxval))
    plt.suptitle("Raster data information")

    # Collect the band description and metadata for the annotation under the plot
    band_description = band.GetDescription()
    metadata = band.GetMetadata_Dict()
    if band_description:
        metadata_list = ["Band description: {}".format(band_description)]
    else:
        metadata_list = ["Band description is not available"]
    if metadata:
        metadata_list += ["{}: {}".format(k, v) for k, v in metadata.items()]
    else:
        metadata_list += ["Metadata is not available"]
    plt.annotate("\n".join(coordinates + metadata_list), (0, 0), (0, -50),
                 xycoords='axes fraction', textcoords='offset points', va='top')

    # Save the figure (the colour limits were already set in imshow)
    plt.savefig(os.path.join(output_dir, "Band_{}_Data.png".format(i)))
    plt.show()

This works well enough for a plot – but it’s an interesting question (a debate) whether it is best/easiest to use GDAL directly or rasterio, Mapbox’s wrapper around it. Tests will tell. And there is Pandas too. They all have pros and cons. Try it yourself and let us know.
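The corner coordinates in the script above come from GDAL's six-term affine geotransform. As a minimal sketch of that arithmetic on its own (no GDAL needed), the helper names `pixel_to_map` and `raster_corners` and the example numbers below are made up for illustration; only the formula itself follows GDAL's documented geotransform convention. Unlike the script, this version also includes the two rotation terms, which are zero for an ordinary north-up image.

```python
def pixel_to_map(geotransform, col, row):
    """Convert a pixel offset (col, row) to georeferenced (x, y)."""
    origin_x, pixel_width, row_rotation, origin_y, col_rotation, pixel_height = geotransform
    x = origin_x + col * pixel_width + row * row_rotation
    y = origin_y + col * col_rotation + row * pixel_height
    return x, y


def raster_corners(geotransform, x_size, y_size):
    """Return the four georeferenced corners of an x_size by y_size raster."""
    return {
        "top_left": pixel_to_map(geotransform, 0, 0),
        "top_right": pixel_to_map(geotransform, x_size, 0),
        "bottom_left": pixel_to_map(geotransform, 0, y_size),
        "bottom_right": pixel_to_map(geotransform, x_size, y_size),
    }


# Hypothetical north-up raster: 10 m pixels, 200 columns by 100 rows
example_gt = (500000.0, 10.0, 0.0, 5740000.0, 0.0, -10.0)
corners = raster_corners(example_gt, x_size=200, y_size=100)
print(corners["top_left"])
print(corners["bottom_right"])
```

In the Colab script the tuple would come from `src_ds.GetGeoTransform()` and the sizes from `src_ds.RasterXSize` and `src_ds.RasterYSize`.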

I’m looking into ways of sharing the colabs more directly for those who are interested – that’s the whole point.

Visualising Satellite data using Google Colab

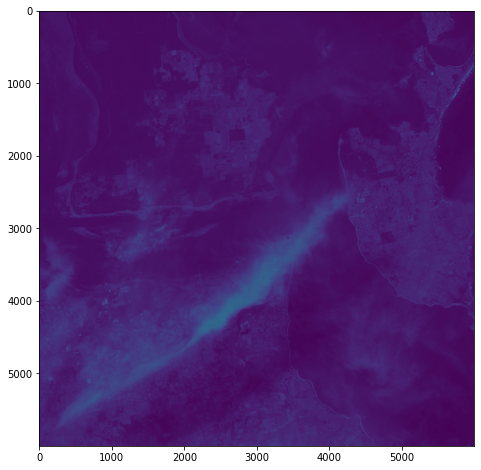

Having spent a few hours reading documentation and having an ongoing conversation with ChatGPT, I’m getting the hang of the HDF5 file structure and can now visualise some multispectral data in Google Colab:

from google.colab import drive
drive.mount('/content/drive')

import h5py
import numpy as np
import matplotlib.pyplot as plt

# Get the HDF5 file from your Google Drive
file_id = '/content/drive/MyDrive/DATA/file_name.he5'

with h5py.File(file_id, "r") as f:
    # List all groups
    print("Keys: %s" % f.keys())
    a_group_key = list(f.keys())[0]
    # Get the data
    data = list(f[a_group_key])

# This gives us some idea about the groups/keys in the HDF file and some idea about the datasets contained therein - but will become more detailed as we go along

# Open the HDF5 file
with h5py.File(file_id, 'r') as f:
    # Open the data field
    # Currently this is hard-coded as I know from HDFView that this is the path I want to look at - but really we want to find this programmatically.
    data_field = f['/path_to/Data Fields/Cube']
    # Print the attributes, data type and shape of the data field
    print(f'Attributes: {dict(data_field.attrs)}')
    print(f'Data type: {data_field.dtype}')
    print(f'Shape: {data_field.shape}')

# This gives us some idea about the data cube we are examining - such as its attributes, data type and shape (typically rows and columns) - it'll print them to output

# Open the HDF5 file
with h5py.File(file_id, 'r') as f:
    # Open the data field
    data_field = f['/path_to/Data Fields/Cube']
    # Get the data and reshape it to 2D (rows x columns)
    data = np.array(data_field[:]).reshape(data_field.shape[0], data_field.shape[1])

# Rescale the data values to the 8-bit range 0-255
data = np.uint8(np.interp(data, (data.min(), data.max()), (0, 255)))

# Create an 8 x 8 inch figure (nominally 800 x 800 pixels at 100 dpi)
fig = plt.figure(figsize=(8, 8))

# Add the data to the figure
plt.imshow(data, cmap='viridis')

# Display the figure
plt.show()

Next steps involve developing a way of iteratively traversing the hdf5 directory structure, so that I can identify relevant data fields within the file – they’re not explicitly identified as ‘image files’. This can be done using h5py functions. Another thing to explore is GDAL: once I’ve identified the correct data in geolocation fields, it should become possible to output geotiffs or UE-friendly png files with geolocation metadata.
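As a rough sketch of what that traversal might look like, the function below walks a group hierarchy recursively and keeps only the entries with at least two dimensions, on the assumption that these are the candidates for images or cubes. The name `find_datasets` and the `min_ndim` parameter are my own for illustration. It is written against the mapping-style interface that `h5py.File` and `h5py.Group` expose (`.items()`) and the `.shape` attribute of datasets, so it can also be tried out on plain nested dictionaries; h5py's own `visititems` method is another way to do the same walk.

```python
def find_datasets(group, min_ndim=2, prefix=""):
    """Recursively collect (path, shape) for array-like entries under a group.

    Works on anything that behaves like an h5py.File or h5py.Group
    (a mapping with .items()) whose leaves expose a .shape tuple.
    """
    found = []
    for name, node in group.items():
        path = f"{prefix}/{name}"
        if hasattr(node, "items"):
            # A group: descend into it
            found.extend(find_datasets(node, min_ndim, path))
        else:
            shape = getattr(node, "shape", None)
            # Keep only entries with enough dimensions to be an image or cube
            if shape is not None and len(shape) >= min_ndim:
                found.append((path, tuple(shape)))
    return found


# Usage in Colab (the file path is a placeholder):
# import h5py
# with h5py.File('/content/drive/MyDrive/DATA/file_name.he5', 'r') as f:
#     for path, shape in find_datasets(f):
#         print(path, shape)
```

Filtering on the number of dimensions is only a heuristic: geolocation arrays such as latitude and longitude grids are also 2D, so the printed list still needs reading by eye.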

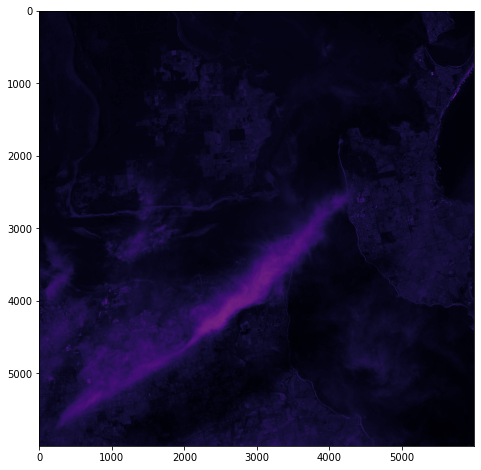

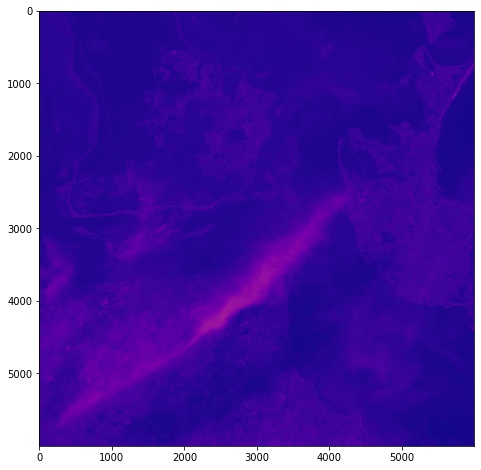

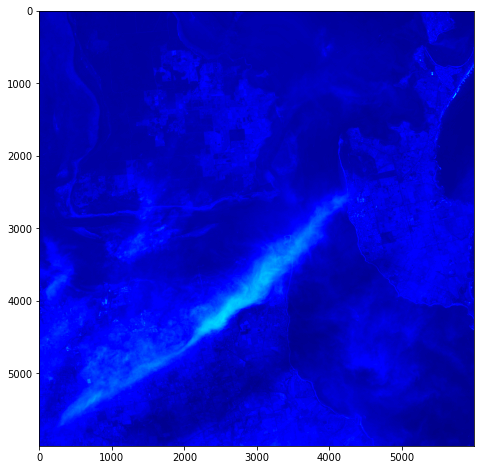

Here are some other matplotlib colourmaps applied to the same dataset.

It’s all pretty crude at this point – just figuring out how this stuff might work.

- gdal_translate: Converts raster data between different formats. https://gdal.org/programs/gdal_translate.html

- h5py: The h5py package is a Pythonic interface to the HDF5 binary data format – https://www.h5py.org

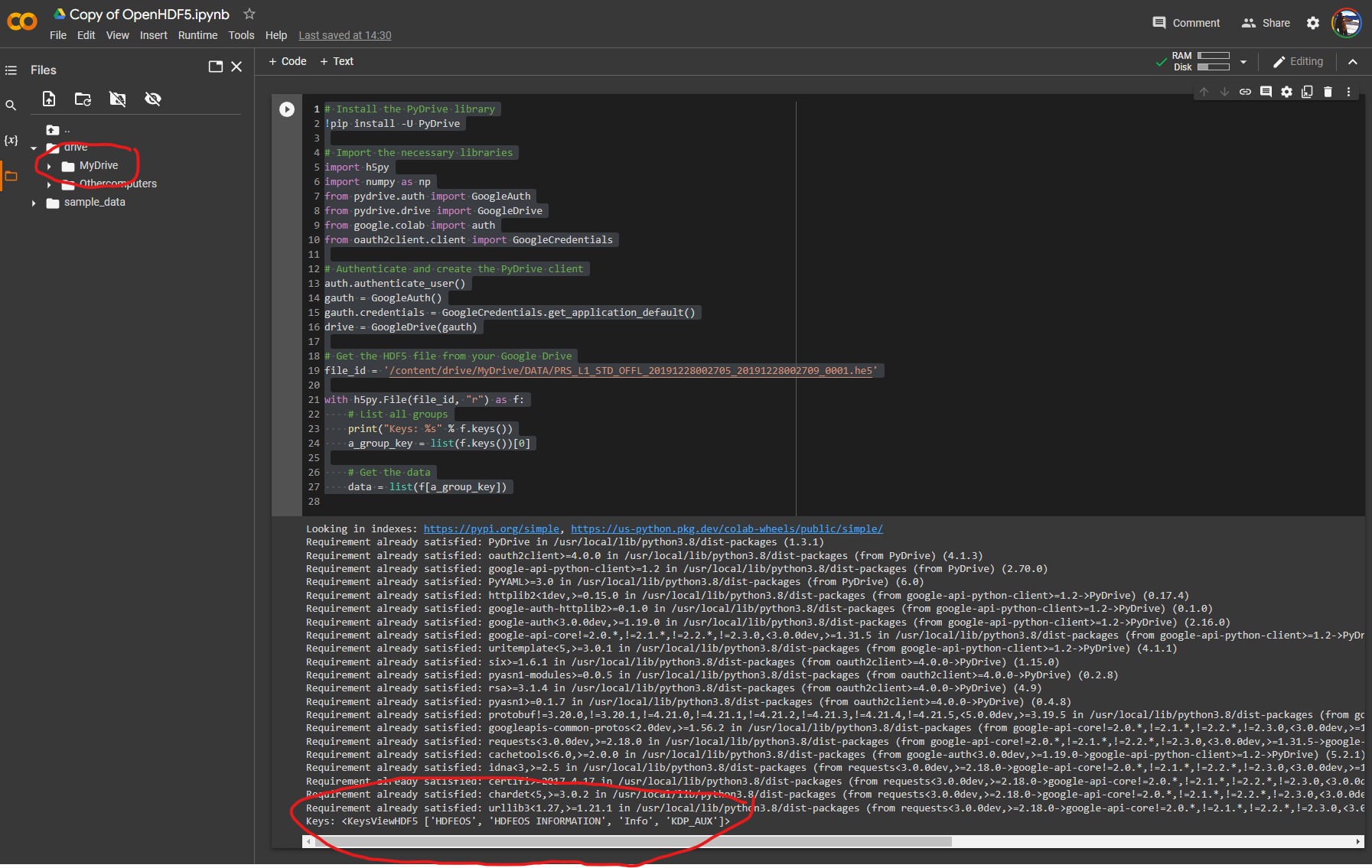

ChatGPT, Google Colab and Hyperspectral Data

It occurs to me that, rather than going to all the hassle of figuring out how to write all the code for Colab myself, I can use my ChatGPT assistant to do it for me:

Q: write a script for google colab to extract images from an hdf5 file located upon my google drive

ChatGPT:

Here is an example script that demonstrates how to extract images from an HDF5 file located on your Google Drive using the h5py library in Python, and the PyDrive library to interact with Google Drive:

# Install the PyDrive library

!pip install -U PyDrive

# Import the necessary libraries

import h5py

import numpy as np

from pydrive.auth import GoogleAuth

from pydrive.drive import GoogleDrive

from google.colab import auth

from oauth2client.client import GoogleCredentials

# Authenticate and create the PyDrive client

auth.authenticate_user()

gauth = GoogleAuth()

gauth.credentials = GoogleCredentials.get_application_default()

drive = GoogleDrive(gauth)

# Get the HDF5 file from your Google Drive

file_id = '<YOUR FILE ID>'

downloaded = drive.CreateFile({'id': file_id})

downloaded.GetContentFile('hyperspectral_image_data.hdf5')

# Open the HDF5 file

with h5py.File('hyperspectral_image_data.hdf5', 'r') as hdf5_file:
    # Access the dataset containing the images
    images = hdf5_file['images']
    # Extract an image by its index
    image = np.array(images[0])
    print(image)

Make sure to replace <YOUR FILE ID> with the actual file ID of the HDF5 file on your Google Drive. You can find the file ID by opening the file in Google Drive and looking at the URL – the file ID is the string of letters and numbers after the last forward slash.

You can also use the gdal_translate command with the PyDrive library as well, just add the following lines of code to download the file and then use gdal_translate command to convert to tiff or geotiff

# Get the HDF5 file from your Google Drive
file_id = '<YOUR FILE ID>'
downloaded = drive.CreateFile({'id': file_id})
downloaded.GetContentFile('hyperspectral_image_data.hdf5')

# Use gdal_translate
!gdal_translate -of GTiff HDF5:"hyperspectral_image_data.hdf5"://dataset output.tif

You can then use any image processing library to open and read the image.

Lots of interesting things to unpack in this advice – and, I expect, lots of errors to debug in the code.
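One detail in the advice above worth checking is where the file ID sits. In the Drive share links I have seen, of the form `https://drive.google.com/file/d/<ID>/view?usp=sharing`, the ID is the segment between `/d/` and `/view` rather than whatever follows the last forward slash. A small sketch for pulling it out is below; the function name `drive_file_id` and the example ID are made up, and the two URL shapes it handles are assumptions about common link formats rather than a complete list.

```python
import re
from urllib.parse import urlparse, parse_qs


def drive_file_id(url):
    """Return the file ID from a Google Drive share URL, or None if not found."""
    # Links of the form .../file/d/<ID>/view or .../d/<ID>/edit
    match = re.search(r"/d/([A-Za-z0-9_-]+)", url)
    if match:
        return match.group(1)
    # Links of the form ...?id=<ID>
    ids = parse_qs(urlparse(url).query).get("id")
    return ids[0] if ids else None


# Made-up ID, for illustration only
print(drive_file_id("https://drive.google.com/file/d/1AbC_dEf-123/view?usp=sharing"))
```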

So, as a first step, let’s see if we can just get access to the file and some basic metadata.

# Install the PyDrive library
!pip install -U PyDrive

# Import the necessary libraries
import h5py
import numpy as np
from pydrive.auth import GoogleAuth
from pydrive.drive import GoogleDrive
from google.colab import auth
from oauth2client.client import GoogleCredentials

# Authenticate and create the PyDrive client
auth.authenticate_user()
gauth = GoogleAuth()
gauth.credentials = GoogleCredentials.get_application_default()
drive = GoogleDrive(gauth)

# Get the HDF5 file from your Google Drive
# Note: this is a path on the mounted Drive rather than a Drive file ID, so it assumes
# drive.mount('/content/drive') has already been run in this session
file_id = '/content/drive/MyDrive/DATA/XXXXXXX.he5'

with h5py.File(file_id, "r") as f:
    # List all groups
    print("Keys: %s" % f.keys())
    a_group_key = list(f.keys())[0]
    # Get the data
    data = list(f[a_group_key])

Success! We can see the HDF keys returned below:

Keys: <KeysViewHDF5 ['HDFEOS', 'HDFEOS INFORMATION', 'Info', 'KDP_AUX']>

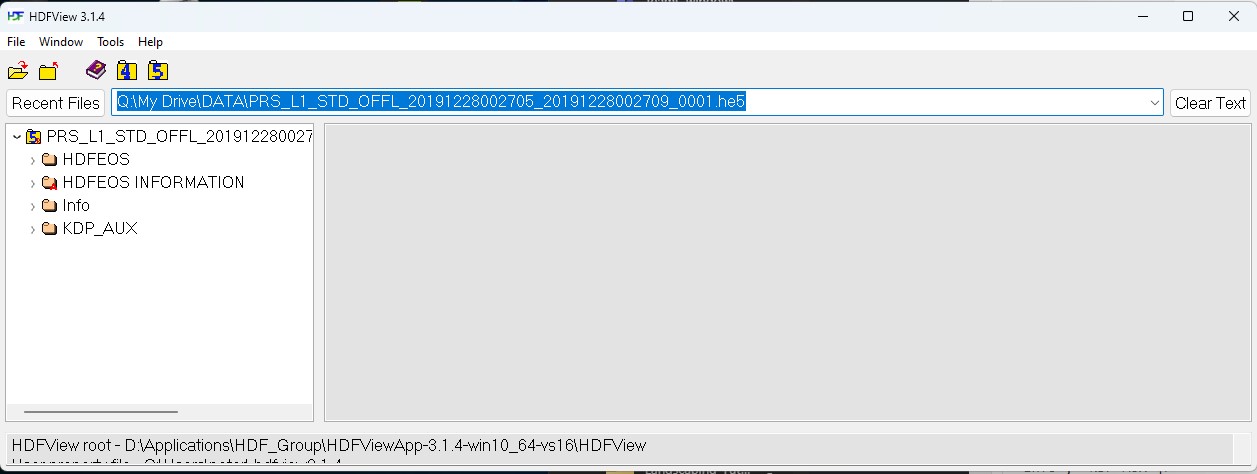

These match the top-level directory structure revealed by HDFView:

This is the first step in identifying the file contents, before we can drill down into the file structure to identify a whole bunch of parameters about the datacube that we can do interesting things with 🙂

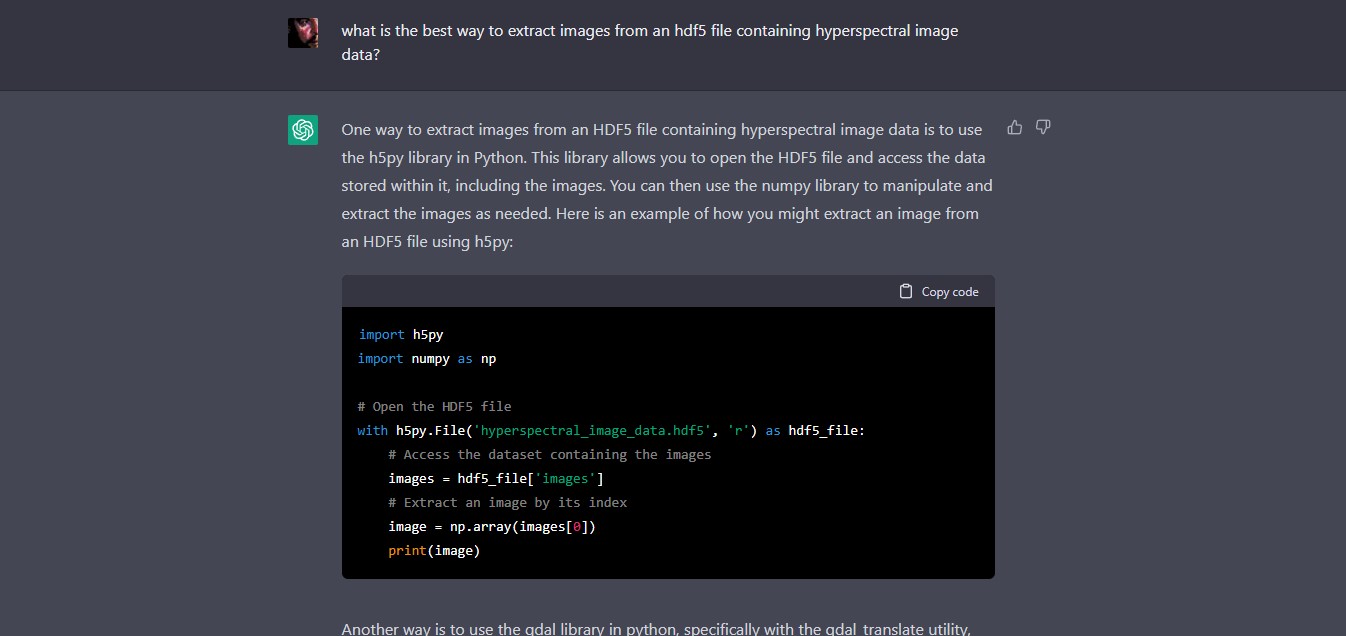

Working with ChatGPT & Hyperspectral Data

Thus far I have been playing around with HDFView to extract hdf5 image data – but I am very interested in working with advanced machine learning systems and developing processes to interface them with Unreal Engine and on-line data sources. It seems the most tractable way to do this is through the use of a Python API – which is pretty much the lingua franca of scientific computing.

As I am a neophyte with using hyperspectral image data, let’s use ChatGPT to find out what might be an ‘easy’ or ‘best’ way to access image data within an hdf5 file:

ChatGPT:

One way to extract images from an HDF5 file containing hyperspectral image data is to use the h5py library in Python. This library allows you to open the HDF5 file and access the data stored within it, including the images. You can then use the numpy library to manipulate and extract the images as needed. Here is an example of how you might extract an image from an HDF5 file using h5py:

import h5py
import numpy as np

# Open the HDF5 file
with h5py.File('hyperspectral_image_data.hdf5', 'r') as hdf5_file:
    # Access the dataset containing the images
    images = hdf5_file['images']
    # Extract an image by its index
    image = np.array(images[0])
    print(image)

Another way is to use the gdal library in python, specifically with the gdal_translate utility, which can convert HDF5 to other raster formats like tiff or geotiff, and then use any image processing library to open and read the image.

gdal_translate -of GTiff HDF5:"file.hdf5"://dataset output.tif

In this way you will be able to use any library of your preference to read and extract the images.

I have no idea currently whether this will work, so let’s look up the relevant documentation:

- h5py: The h5py package is a Pythonic interface to the HDF5 binary data format – https://www.h5py.org

- gdal_translate: Converts raster data between different formats. https://gdal.org/programs/gdal_translate.html

Both look promising – and ChatGPT has saved me a heap of time looking for ways to do it!

The first outputs images as a NumPy array – meaning that we can examine/export each image by its index – which would be useful for selecting certain λ (wavelength) values and conducting operations upon them.

The second uses GDAL (Geospatial Data Abstraction Library), which provides powerful utilities for the translation of geospatial metadata – enabling correct geolocation of the hyperspectral image data, for instance.

So perhaps a combination of both will be useful as we proceed.
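To make the index-based selection mentioned above a little more concrete, here is a minimal sketch of picking the band closest to a target wavelength. Everything in it is assumed for illustration: the `nearest_band` helper, the list of band-centre wavelengths, and a cube laid out as (bands, rows, columns). A real file may order its axes differently and will store its wavelengths somewhere in its own metadata.

```python
import numpy as np


def nearest_band(wavelengths_nm, target_nm):
    """Return the index of the band whose centre wavelength is closest to target_nm."""
    wavelengths_nm = np.asarray(wavelengths_nm, dtype=float)
    return int(np.argmin(np.abs(wavelengths_nm - target_nm)))


# Hypothetical cube: 5 bands of 4 x 3 pixels, band axis first
wavelengths_nm = [450.0, 550.0, 650.0, 750.0, 850.0]
cube = np.arange(5 * 4 * 3, dtype=float).reshape(5, 4, 3)

# Pick the band nearest to 660 nm (red) and slice it out as a 2D image
red_index = nearest_band(wavelengths_nm, 660.0)
red_image = cube[red_index]
print(red_index, red_image.shape)
```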

But of course, any code generated by ChatGPT, OpenAI Codex or other AI assistants must be taken with several grains of salt. For instance, a recent study by Stanford researchers shows that users may write more insecure code when working with an AI code assistant (https://doi.org/10.48550/arXiv.2211.03622). Perhaps there are whole API calls and phrases that are hallucinated? I simply don’t know at this stage.

So – my next step will be to fire up a Python environment – probably Google Colab or Anaconda – and see what happens.
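One cheap first check for hallucinated API calls is simply to ask Python whether a suggested name exists before building on it. The sketch below is one way that might be done with the standard library; the helper name `api_exists` is my own. It only confirms that a dotted name resolves to something in the installed packages, not that it behaves the way the assistant described, and note that it imports the module in order to look, which runs that module's top-level code.

```python
import importlib


def api_exists(dotted_name):
    """Return True if a dotted name such as 'os.path.join' resolves to a real object."""
    parts = dotted_name.split(".")
    # Try the longest importable module prefix first, then walk the remaining attributes
    for split in range(len(parts), 0, -1):
        module_name = ".".join(parts[:split])
        try:
            obj = importlib.import_module(module_name)
        except ImportError:
            continue
        for attr in parts[split:]:
            if not hasattr(obj, attr):
                return False
            obj = getattr(obj, attr)
        return True
    return False


print(api_exists("json.dumps"))        # a real standard-library function
print(api_exists("json.dump_pretty"))  # a plausible-sounding name that does not exist
```

The same call could be pointed at names such as `h5py.File` in an environment where that package is installed.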

A nice overview of Codex here:

OpenAI Codex Live Demo
